Google Sanctions and Filters Applied to Websites

Google's main crawler identifies itself with the user agent Googlebot. It scans page content for the search index. Besides it, there are several specialized robots:

- Googlebot-Mobile: the robot that indexes sites for mobile devices,

- Google Search Appliance (Google) gsa-crawler: the crawler of the Search Appliance hardware and software product,

- Googlebot-Image: the robot that scans pages for the image index,

- Mediapartners-Google: the robot that scans page content to determine which AdSense ads fit it,

- AdsBot-Google: the robot that scans content to assess the quality of AdWords landing pages.
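To see which of these robots visit a site, one option is to match the User-Agent header from the server logs against the crawler names listed above. The sketch below is only an illustration: the function name `classify_user_agent` and the substring matching rule are assumptions, and a User-Agent string can be spoofed, so a match is a hint rather than proof.

```python
from typing import Optional

# Crawler tokens from the list above, mapped to what each robot is for.
# More specific tokens come first so that "Googlebot-Image" is not
# reported as the generic "Googlebot".
CRAWLER_TOKENS = {
    "googlebot-mobile": "mobile index",
    "googlebot-image": "image index",
    "mediapartners-google": "AdSense",
    "adsbot-google": "AdWords landing page quality",
    "gsa-crawler": "Search Appliance",
    "googlebot": "main search index",
}


def classify_user_agent(user_agent: str) -> Optional[str]:
    """Return the purpose of a known Google crawler, or None if no token matches."""
    lowered = user_agent.lower()
    for token, purpose in CRAWLER_TOKENS.items():
        if token in lowered:
            return purpose
    return None
```

For example, a log line with the User-Agent `Googlebot-Image/1.0` would be classified as an image index visit, while an ordinary browser string returns `None`.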

I have experience promoting sites, and much of what I know comes from practice rather than theory, but I have no experience with search engine sanctions simply because I have never received any. To refresh and structure this information for myself and for you, friends, I decided to describe the most common types of sanctions from Google, both manual and automatic.

There are two kinds of sanctions. Manual sanctions are imposed by hand after dedicated Google employees review a site. Automatic sanctions are imposed by the system itself through its algorithms, and the system also lifts them once the site's problems are fixed.

Now let's look at each kind in more detail, along with how to avoid them on your own site.

Manual Google sanctions

As mentioned above, these sanctions are imposed by hand by dedicated Google employees. How are sites chosen for review? One source is complaints, for example from competitors, about low-quality promotion. Another is sites that the algorithms flagged as unfairly promoted but without certainty, roughly 50/50 between a deserved ban and a coincidental match. In such cases people step in and decide whether the site is sanctioned.

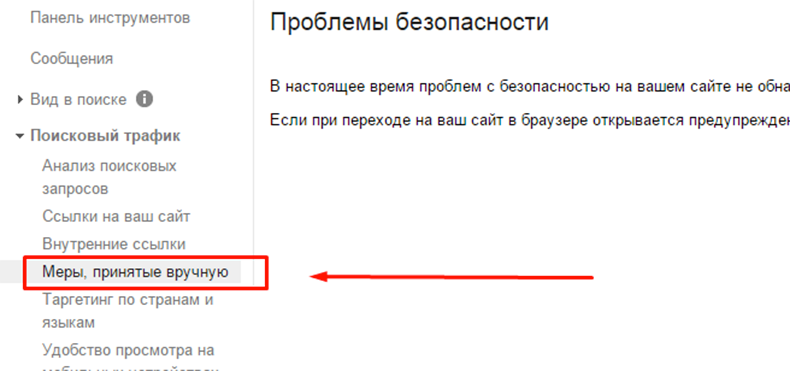

Paradoxical as it sounds, manual sanctions have one advantage over automatic ones: you receive a notification about the sanction in the webmaster panel. To see it, open the Search Traffic section and go to "Manual Actions".

If you see a message about a penalty there, take steps to fix the problem immediately. How to do that depends on the individual case of each site. My goal is to describe the symptoms; yours is to deal with them if they ever appear.

Sandbox filter from Google

Every second newly created site falls under this filter. But first, its essence and how it is applied. In Google's view, every young site has to go through a period of growing up, which includes smooth development, stable behavioral factors, and a steadily growing, natural link profile. There are no exact terms for the sandbox, but the common opinion is that the filter lasts from 4 to 6 months.

How do you avoid this filter and develop a site so that the sandbox causes no problems? Strictly speaking, every site passes through the sandbox. How long the search engine deliberately holds your site back in the results depends entirely on you.

The duration depends on the quality of the resource itself and, most importantly, on the queries it is promoted for. The more competitive the query, the longer the site stays in the sandbox. As a rule, this applies to commercial resources.

Older sites can also end up in the sandbox if they lose Google's trust for one reason or another. This can happen because of non-unique content, because of many direct off-topic links, both natural and paid, or because of links to questionable resources.

The most effective way to get through the sandbox faster is to optimize the site only for mid-frequency and low-frequency queries during the first 2 to 4 months, and only then take on high-frequency queries. Of course, this is my opinion and experience; what you do is up to you.

Penguin filter from Google

I will say right away that besides the automatic mode, this filter is sometimes applied manually as well. Penguin fights a low-quality, or more precisely unnatural, link profile. One could write a whole book about this filter, with case studies of recovering from Penguin and advice on building a link profile in today's conditions. I will cover only a few points that will help you understand this tricky creature from Google better.

- Avoid a large number of links whose anchor is an exact match of the promoted keyword

- Do not place links just anywhere; this applies especially to temporary links

- Beware of sitewide links from a site's footer and header

- Do not point links only at the promoted pages; distribute them across the whole site

- Grow the link profile smoothly and steadily, with no spikes

Panda filter from Google

This filter was launched in 2011, because keyword spam in page content could no longer continue on that scale. The filter is applied to sites for:

- Non-unique (stolen) content

- Keyword overspam in the text

- Duplicate pages on the site

- Off-topic advertising on a page

- Excessive use of the keyword across the page (title, h1, h2, alt, strong, em)

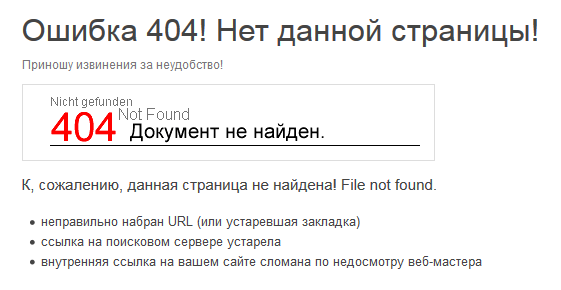

There is an opinion that broken links on a site, the familiar 404s, can also trigger Panda. To avoid this filter, all you need is a thorough, comprehensive audit of your site. If you lack the experience or understanding to do it yourself, turn to specialists who know the subject.

Supplementary Results filter from Google

This filter finds pages of your site and excludes them from Google's main results. What can cause this?

- The page does not answer or fully cover the query it is promoted for or was written for

- Any of the signs listed for the Panda filter

- Frequent moves of the page between categories, or replacing its URL with another one

How do you find out whether your site is in the supplementary results? It is very easy to check: enter site:http://www.site.com/& in the search engine (just remember to replace site with your own domain ;-)

Bombing filter from Google

This filter is applied to a site for using a large number of identical anchor texts when building its link profile. Even if you buy 100 links from 100 different domains, if 80% of them use the same keyword as the anchor, you will very likely get this filter. Such links stop being counted, their weight is zeroed out, and your site simply does not rise in the results for that query.

To avoid the risk of falling under this filter, I recommend buying links sensibly and keeping an eye on your anchor list.
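One way to keep an eye on the anchor list is to compute what share of backlinks uses each anchor text. The sketch below assumes you already have the anchors as a list of strings, for example exported from a backlink tool. The function names and the `max_share` threshold of 0.5 are hypothetical choices for illustration, not values published by Google.

```python
from collections import Counter


def anchor_shares(anchors):
    """Return {anchor: share of all links} for a list of anchor texts."""
    # Normalize case and whitespace so "Buy Shoes" and "buy shoes " count as one anchor.
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    total = len(normalized)
    if total == 0:
        return {}
    counts = Counter(normalized)
    return {anchor: count / total for anchor, count in counts.items()}


def overused_anchors(anchors, max_share=0.5):
    """List anchors whose share exceeds max_share (an assumed threshold)."""
    shares = anchor_shares(anchors)
    return sorted(anchor for anchor, share in shares.items() if share > max_share)


# The situation from the text: 100 links, 80 of them with the same keyword.
links = ["buy shoes"] * 80 + ["example.com"] * 10 + ["here"] * 10
print(overused_anchors(links))
```

With the 80% example from the text, the only anchor reported is the repeated keyword, which is the signal to dilute the anchor list.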

Florida filter from Google

Sites are penalized by this filter for over-optimization. Panda can be seen as its successor: Florida was launched in 2003, and by 2010 it could no longer cope with low-quality content.

The penalty mostly followed keyword overspam in title and h1-h6, which once again shows that on-site optimization has to be done sensibly. Nobody says articles should not be optimized; just do it without fanaticism. And think first of all about your readers and their convenience.
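A rough self-check for this kind of overspam is to count how often the keyword appears inside title and h1-h6. The sketch below uses Python's standard `html.parser`. The names `KeywordInTagsCounter` and `keyword_counts` are made up for this example, and what counts as "too many" is left to your judgment, since no official threshold is known.

```python
from collections import Counter
from html.parser import HTMLParser

# Tags named in the text as the usual places for keyword overspam.
WATCHED_TAGS = {"title", "h1", "h2", "h3", "h4", "h5", "h6"}


class KeywordInTagsCounter(HTMLParser):
    """Counts keyword occurrences in the text of the watched tags."""

    def __init__(self, keyword):
        super().__init__()
        self.keyword = keyword.lower()
        self.open_tags = []      # watched tags that are currently open
        self.counts = Counter()  # tag name -> number of keyword occurrences

    def handle_starttag(self, tag, attrs):
        if tag in WATCHED_TAGS:
            self.open_tags.append(tag)

    def handle_endtag(self, tag):
        if self.open_tags and self.open_tags[-1] == tag:
            self.open_tags.pop()

    def handle_data(self, data):
        if self.open_tags:
            self.counts[self.open_tags[-1]] += data.lower().count(self.keyword)


def keyword_counts(html, keyword):
    """Return {tag: occurrences of keyword} for title and h1-h6."""
    parser = KeywordInTagsCounter(keyword)
    parser.feed(html)
    parser.close()
    return dict(parser.counts)
```

Text outside the watched tags, such as ordinary paragraphs, is ignored here; the body text would need a separate density check.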

Broken Links filter from Google

The filter is applied for a large and actively growing number of broken (404) links. I had a case where the site's developers changed a URL and a link in the footer started returning a 404 response. As a result, every newly created page produced another broken link on my site. Fortunately, a technical audit helped find the cause and fix it quickly.

To avoid such situations, check the webmaster panel periodically, since it reports broken links. You can also run the free program Xenu over the site; it will point out broken links on your site, and more.
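For a quick check of a single page without extra tools, the same idea can be sketched in Python with the standard library alone. This is a minimal illustration rather than a replacement for a full crawler: the helper names are hypothetical, only `<a href>` links are examined, and some servers do not answer `HEAD` requests correctly, in which case a `GET` would be needed.

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def http_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the server is unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 404 and other error statuses arrive here
    except urllib.error.URLError:
        return None


def find_broken_links(page_url, html, get_status=http_status):
    """Return absolute URLs linked from the page that answer with 404."""
    collector = LinkCollector()
    collector.feed(html)
    broken = []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)  # resolve relative links
        if not absolute.startswith(("http://", "https://")):
            continue  # skip mailto:, tel:, javascript: and similar
        if get_status(absolute) == 404:
            broken.append(absolute)
    return broken
```

The `get_status` parameter exists so the check can be run against a prepared table of statuses instead of the live network, which is convenient for testing.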

Link Dump (Links) filter from Google

This filter is applied for too many outgoing links from the pages of your site. When outgoing links outnumber incoming ones, it marks the site either as one that sells links or as low-quality for people, given the lack of incoming links.

Getting out from under the "Links" filter is simple. If a page has more than 25 outgoing links, delete such pages or hide them from indexing. The same applies when incoming links are several times fewer than outgoing ones. You can check this ratio with the RDS browser plugin or similar checkers.
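The 25-link rule of thumb above is easy to check per page. The sketch below counts links that point to other hosts; the function names are hypothetical, the limit of 25 comes from the text rather than from any Google documentation, and for simplicity `www.site.com` and `site.com` are treated as different hosts.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class HrefCollector(HTMLParser):
    """Collects the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def external_links(page_url, html):
    """Return absolute http(s) links that lead to a host other than the page's own."""
    own_host = urlparse(page_url).netloc.lower()
    collector = HrefCollector()
    collector.feed(html)
    result = []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)
        parsed = urlparse(absolute)
        if parsed.scheme in ("http", "https") and parsed.netloc.lower() != own_host:
            result.append(absolute)
    return result


def has_too_many_outgoing(page_url, html, limit=25):
    """True if the page has more outgoing links than the limit from the text."""
    return len(external_links(page_url, html)) > limit
```

Pages flagged this way are the candidates for deletion or for hiding from indexing.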

Page Load Time filter from Google

The name speaks for itself: the filter is applied to a site whose server takes a very long time to respond when the robot requests pages for crawling. This lowers your site in the results in favor of faster sites. The webmaster panel now has a service that checks page load speed and gives recommendations for improving it, so do not neglect it. The service is called PageSpeed Insights.
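Before turning to PageSpeed Insights, you can get a rough idea of server response time yourself. The sketch below measures how long it takes to receive the first byte of each page. The function names and the one-second threshold are assumptions made for illustration; Google does not publish the value at which it considers a response too slow.

```python
import time
import urllib.request


def fetch_first_byte(url, timeout=10):
    """Request the URL and read one byte, which is enough to time the server response."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read(1)


def response_time(url, fetch=fetch_first_byte):
    """Return the number of seconds that fetch(url) takes."""
    start = time.perf_counter()
    fetch(url)
    return time.perf_counter() - start


def slow_pages(urls, threshold_seconds=1.0, fetch=fetch_first_byte):
    """Return {url: seconds} for pages slower than the (assumed) threshold."""
    timings = {url: response_time(url, fetch) for url in urls}
    return {url: seconds for url, seconds in timings.items() if seconds > threshold_seconds}
```

A single measurement from one machine is noisy, so it makes sense to repeat it several times and to treat the result only as a reason to look deeper.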

These are far from all the filters Google has, but I chose the most common ones and those that most site owners forget about or do not know. To avoid a filter, you need to know what it can be applied for. Not knowing means promoting blindly and hoping to get lucky!

I also did not cover things like checking the validity of your markup, responsive design, structured data and so on, which also affect a site's positions in the results directly or indirectly. I hope you find this information useful and become more careful when promoting your own or other people's resources. Good luck with your promotion!

Via sozdaj-sam.com & wiki

Created/Updated: 25.05.2018
